Research on pipe fitting identification and pose estimation based on deep learning


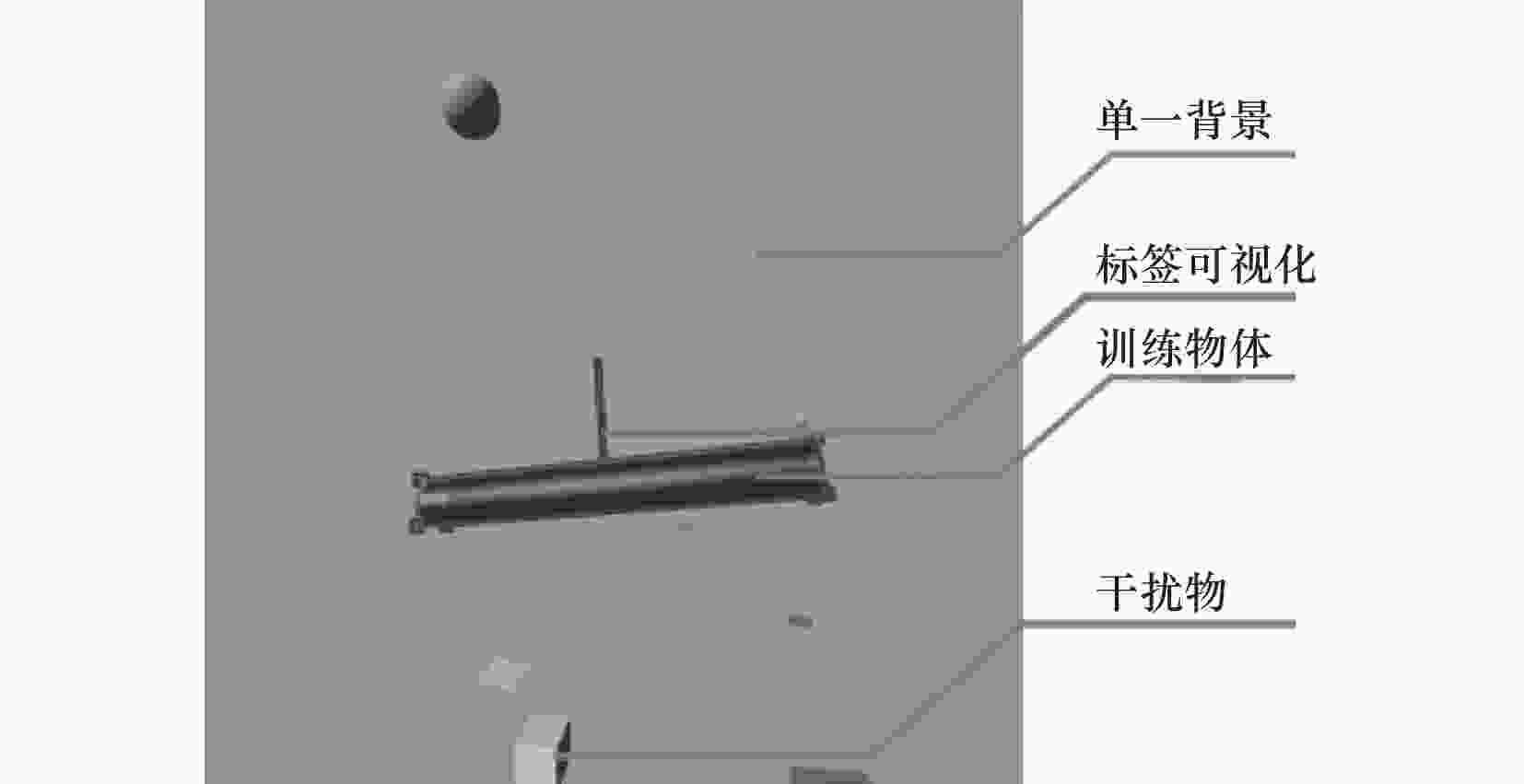

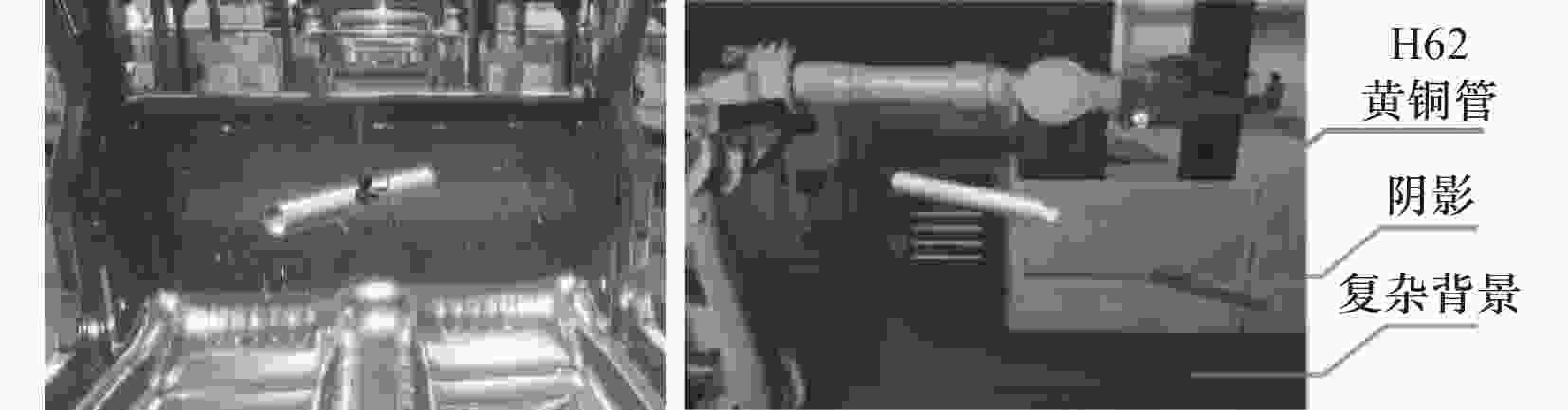

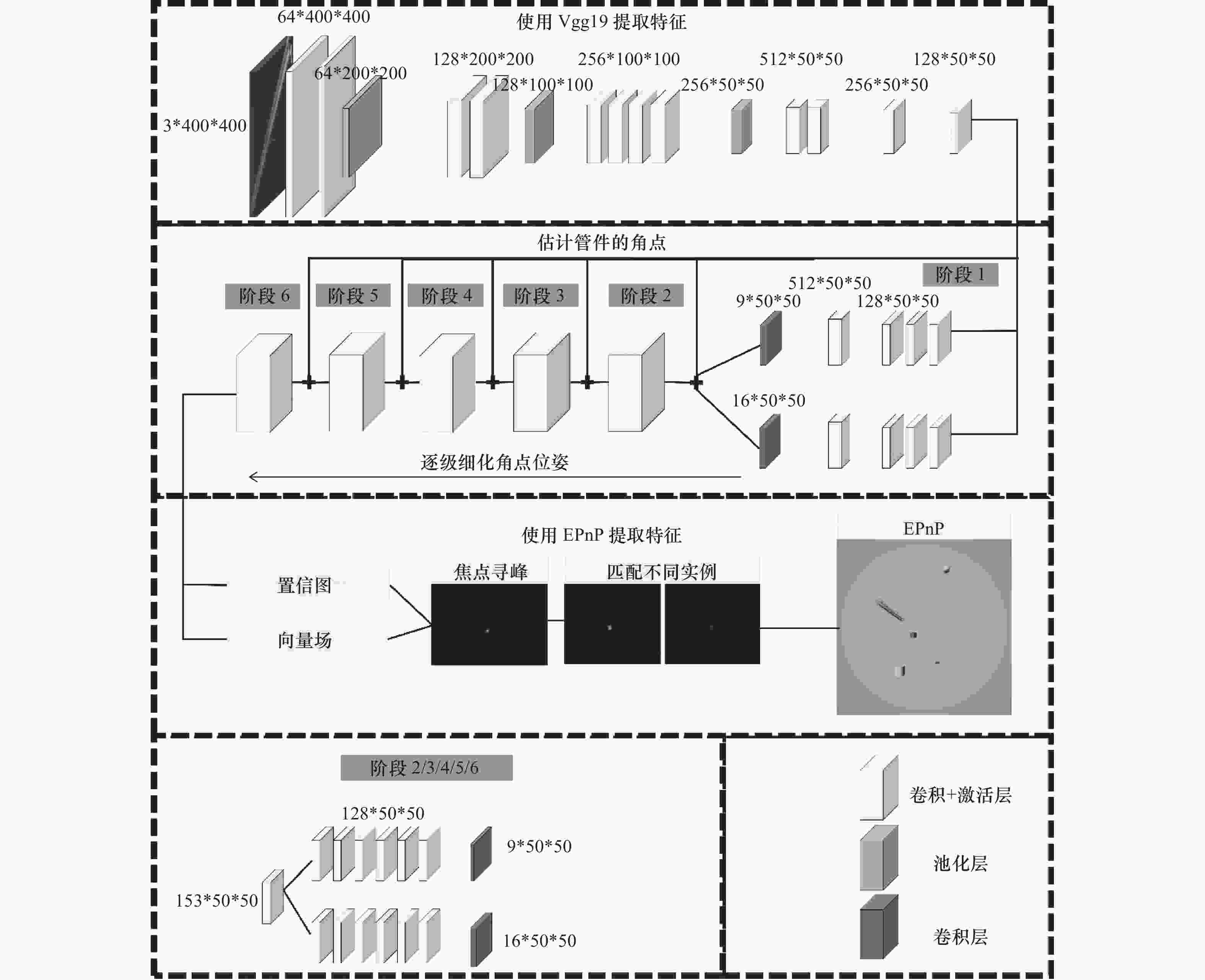

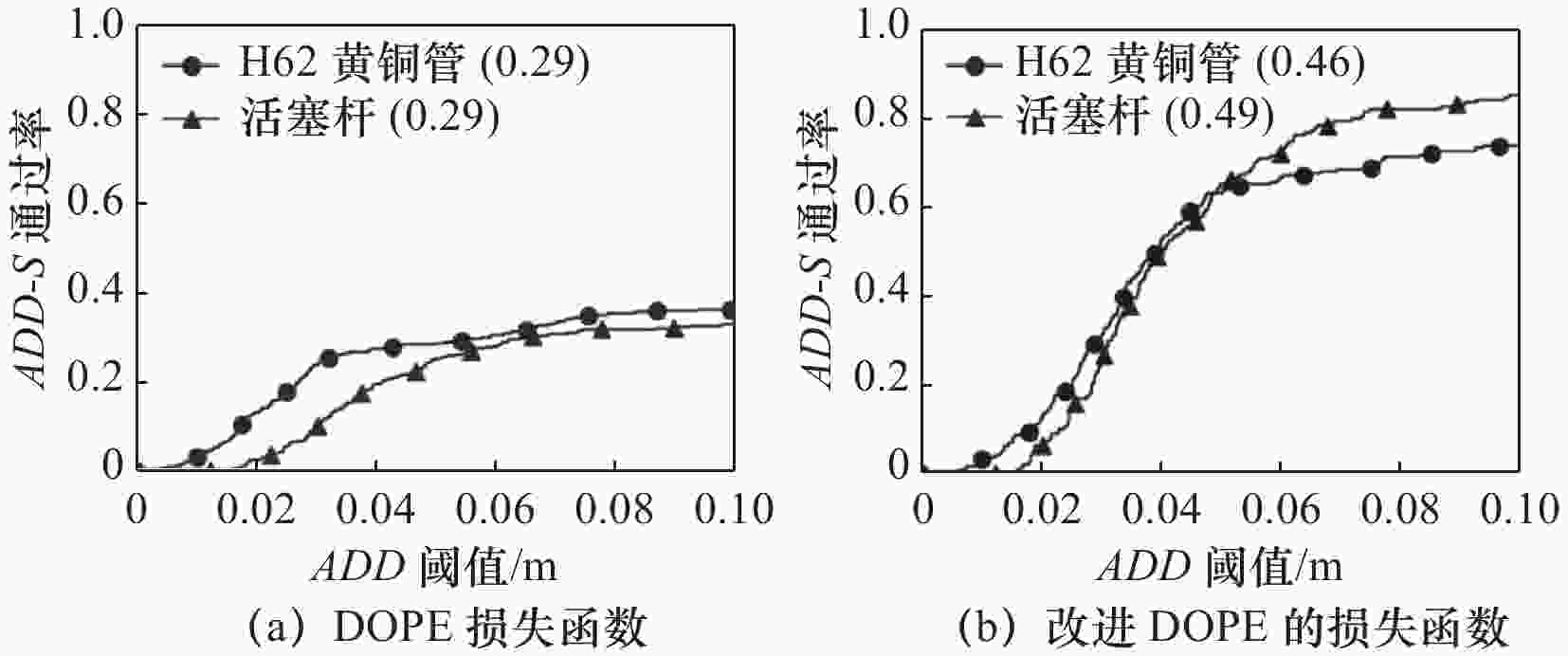

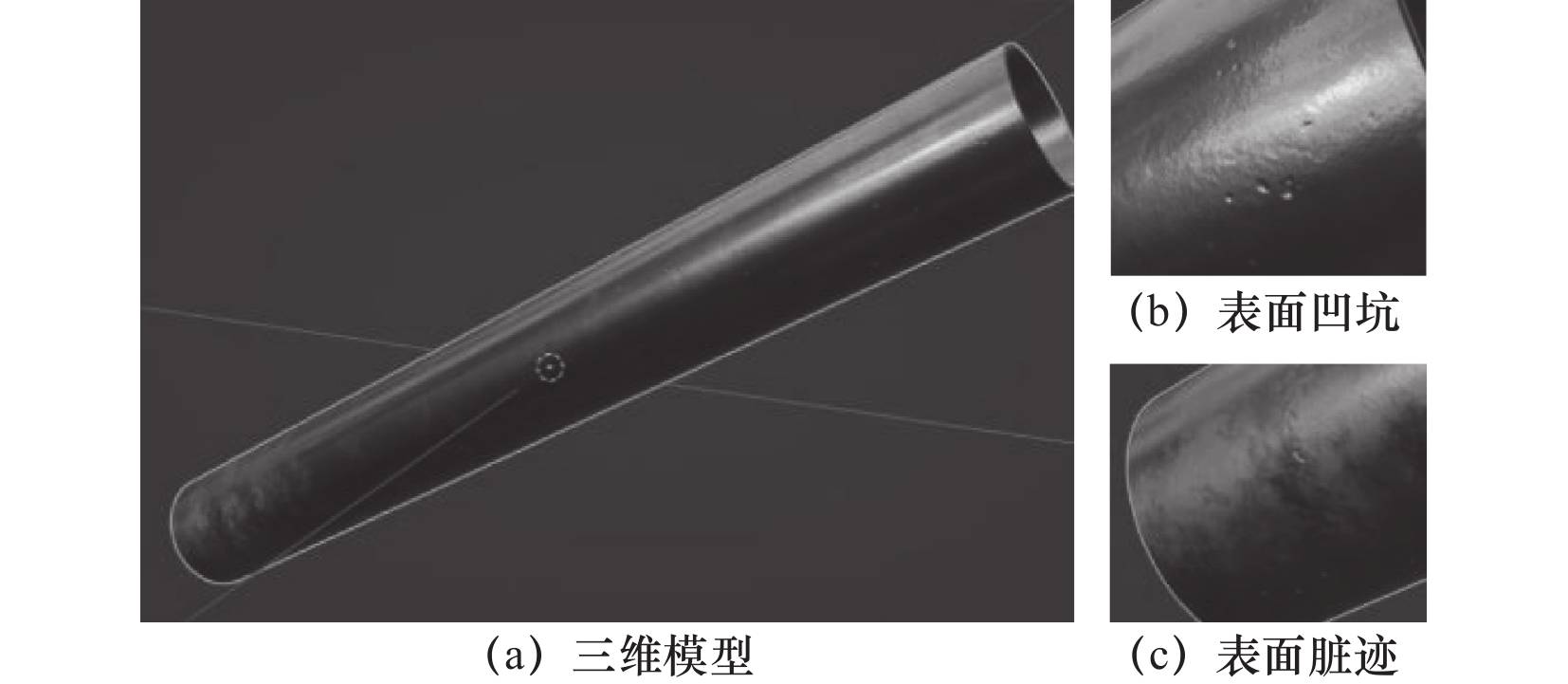

Abstract: 6D pose estimation of pipe fittings is a prerequisite for robotic grasping and polishing, but traditional estimation strategies involve a heavy workload. This paper proposes an improved deep object pose estimation (DOPE) framework, based on deep learning, for real-time detection of pipe fittings. First, a synthetic dataset is created to train the network. Second, two improvements to the network are proposed: a custom loss function that accounts for the rotational symmetry of pipe fittings, which improves detection accuracy, and a ResNet18 feature extractor, which reduces the network size. Finally, the effect of the number of heatmap stages on inference time is investigated. When the improved DOPE network is used to estimate pipe-fitting poses, the area under the accuracy-threshold curve (AUC) increases by 17%, the number of parameters and floating-point operations (FLOPs) decrease by 9% and 20% respectively, and detecting a single image takes only 102 ms. Pose estimation experiments on pipe fittings demonstrate the effectiveness of the improved DOPE and show that it meets industrial requirements.
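One common way to make a keypoint loss insensitive to rotational symmetry is to compare the predicted belief maps with every symmetry-equivalent relabelling of the ground truth and keep the smallest error, so the network is not penalised for picking any of the equivalent corner labels. The following is a minimal sketch of that idea in Python (NumPy), not necessarily the custom loss used in this work. It assumes a DOPE-style output of nine belief maps (eight cuboid corners plus the centroid), a hypothetical channel order in which channels 0 to 3 and 4 to 7 run around the two end faces of the pipe's bounding cuboid, and four-fold symmetry about the pipe axis; `symmetry_permutations` and `symmetric_belief_loss` are illustrative names.

```python
import numpy as np

# Hypothetical layout: channels 0-3 are the cuboid corners on one end face in
# cyclic order around the pipe axis, 4-7 the matching corners on the other end
# face, and 8 is the centroid.
NUM_MAPS = 9


def symmetry_permutations() -> list:
    """Channel orders produced by rotating the cuboid 0, 90, 180 and 270 degrees
    about the pipe axis (assumed four-fold symmetry)."""
    perms = []
    for k in range(4):
        face_a = [(i + k) % 4 for i in range(4)]
        face_b = [4 + (i + k) % 4 for i in range(4)]
        perms.append(np.array(face_a + face_b + [8]))  # the centroid never moves
    return perms


def symmetric_belief_loss(pred: np.ndarray, target: np.ndarray) -> float:
    """Mean squared error against the best-matching symmetric relabelling.

    pred and target have shape (NUM_MAPS, H, W).
    """
    losses = [np.mean((pred - target[perm]) ** 2) for perm in symmetry_permutations()]
    return float(min(losses))


rng = np.random.default_rng(0)
target = rng.random((NUM_MAPS, 50, 50))
pred = target[symmetry_permutations()[1]]  # same maps, labels shifted by one corner
print(float(np.mean((pred - target) ** 2)) > 0.0, symmetric_belief_loss(pred, target))
# True 0.0
```

A plain MSE penalises the relabelled prediction even though it describes the same physical pose, while the symmetric version returns zero. In DOPE-style training the vector fields pointing from the corners to the centroid would need the same relabelling, and the loss would typically be summed over all heatmap stages; both are omitted here for brevity.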


Key words:

- pipe fitting
- pose estimation
- DOPE
- deep learning


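Table 1 Structure of the first 13 layers of ResNet18

| Module | Conv1 | Conv2 | Conv3 | Conv4 |
| --- | --- | --- | --- | --- |
| Layer configuration | $7\times 7$, 64, stride 2 | $\left\{\begin{array}{c}3\times 3,64\\ 3\times 3,64\end{array}\right\}\times 2$ | $\left\{\begin{array}{c}3\times 3,128\\ 3\times 3,128\end{array}\right\}\times 2$ | $\left\{\begin{array}{c}3\times 3,256\\ 3\times 3,256\end{array}\right\}\times 2$ |
| Output size | $200\times 200$ | $200\times 200$ | $100\times 100$ | $50\times 50$ |

Table 2 Test results of different feature-extraction modules

| Feature extractor | AUC (H62 brass tube) | AUC (piston rod) | Parameters/M | FLOPs/G | Inference time/ms |
| --- | --- | --- | --- | --- | --- |
| VGG19 | 0.46 | 0.49 | 50.26 | 155.65 | 248 |
| ResNet18 | 0.49 | 0.48 | 46.00 | 124.90 | 203 |
| ResNet34 | 0.50 | 0.50 | 51.39 | 145.58 | 241 |

Table 3 Test results for different numbers of stages with ResNet18 feature extraction (dataset size: 10 000 images)

| Configuration | AUC (H62 brass tube) | AUC (piston rod) | Parameters/M | FLOPs/G | Inference time/ms |
| --- | --- | --- | --- | --- | --- |
| ResNet18 + 2 stages | 0.46 | 0.46 | 12.48 | 41.09 | 102 |
| ResNet18 + 4 stages | 0.49 | 0.45 | 29.24 | 82.99 | 155 |
| ResNet18 + 6 stages | 0.49 | 0.48 | 46.00 | 124.90 | 203 |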
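The output sizes in Table 1 follow from the standard convolution output-size formula $\lfloor (H + 2p - k)/s \rfloor + 1$. The Python sketch below reproduces them under assumptions the table does not state: a 400 × 400 input, padding of k // 2, stride 2 only in the first convolution of Conv1, Conv3 and Conv4, and no max-pooling after Conv1 (the 200 × 200 listed for Conv2 suggests the usual ResNet max-pooling was dropped). It also counts the main-path convolutions, which gives the 13 layers in the caption; 1 × 1 shortcut projections are not counted, following the usual ResNet layer count. `conv_out`, `GROUPS` and `trunk_output_sizes` are illustrative names.

```python
def conv_out(size: int, kernel: int, stride: int, padding: int) -> int:
    """Output height/width of a convolution: floor((H + 2p - k) / s) + 1."""
    return (size + 2 * padding - kernel) // stride + 1


# (name, conv layers in the group, stride of its first conv, kernel size) per Table 1.
# Assumed, not stated in the table: 400 x 400 input, padding k // 2, no max-pooling.
GROUPS = [
    ("Conv1", 1, 2, 7),
    ("Conv2", 4, 1, 3),
    ("Conv3", 4, 2, 3),
    ("Conv4", 4, 2, 3),
]


def trunk_output_sizes(input_size: int = 400):
    """Return the output size after each group and the number of main-path conv layers."""
    size, n_layers, sizes = input_size, 0, {}
    for name, n_convs, first_stride, kernel in GROUPS:
        for i in range(n_convs):
            stride = first_stride if i == 0 else 1
            size = conv_out(size, kernel, stride, kernel // 2)
        n_layers += n_convs
        sizes[name] = size
    return sizes, n_layers


print(trunk_output_sizes())
# ({'Conv1': 200, 'Conv2': 200, 'Conv3': 100, 'Conv4': 50}, 13)
```

Stopping after Conv4 leaves a 50 × 50 feature map with 256 channels, which is where the belief-map stages would take over in a DOPE-style network.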
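The relative savings of each backbone can be checked directly against Table 2. The ResNet18 row of Table 2 matches the six-stage row of Table 3, which suggests that all backbones in Table 2 were compared with six stages. This short Python sketch computes (baseline - new) / baseline with VGG19 as the baseline; `BACKBONES` and `reduction` are illustrative names, and a negative value means an increase.

```python
# (parameters / M, FLOPs / G, inference time / ms) per feature extractor, from Table 2.
BACKBONES = {
    "VGG19": (50.26, 155.65, 248),
    "ResNet18": (46.00, 124.90, 203),
    "ResNet34": (51.39, 145.58, 241),
}


def reduction(baseline: float, new: float) -> float:
    """Relative reduction in percent; negative values mean an increase."""
    return (baseline - new) / baseline * 100.0


for name in ("ResNet18", "ResNet34"):
    pairs = zip(BACKBONES["VGG19"], BACKBONES[name])
    print(name, [round(reduction(b, n), 1) for b, n in pairs])
# ResNet18 [8.5, 19.8, 18.1]
# ResNet34 [-2.2, 6.5, 2.8]
```

ResNet18 therefore has about 8.5% fewer parameters and 19.8% fewer FLOPs than VGG19, close to the 9% and 20% quoted in the abstract, while ResNet34 has slightly more parameters than VGG19.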
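The trade-off explored in Table 3 can be made explicit by computing the average cost of each added stage between consecutive rows. This Python sketch uses only the numbers in Table 3; `STAGE_RESULTS` and `cost_per_extra_stage` are illustrative names.

```python
# (number of stages, parameters / M, FLOPs / G, inference time / ms), from Table 3.
STAGE_RESULTS = [
    (2, 12.48, 41.09, 102),
    (4, 29.24, 82.99, 155),
    (6, 46.00, 124.90, 203),
]


def cost_per_extra_stage(rows):
    """Average increase in (parameters, FLOPs, time) per added stage
    between consecutive rows of the table."""
    increments = []
    for (s0, *before), (s1, *after) in zip(rows, rows[1:]):
        increments.append(tuple((b - a) / (s1 - s0) for a, b in zip(before, after)))
    return increments


for params, flops, time_ms in cost_per_extra_stage(STAGE_RESULTS):
    print(f"+{params:.2f} M params, +{flops:.1f} GFLOPs, +{time_ms:.1f} ms per stage")
```

On these rows each additional stage adds about 8.38 M parameters, about 21 GFLOPs and 24 to 27 ms, so cost grows roughly linearly with the number of stages. Going from six stages to two roughly halves inference time (203 ms to 102 ms), while the AUC in Table 3 drops by at most 0.03.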
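Tables 2 and 3 report AUC, the area under the accuracy-threshold curve. A minimal Python sketch of how such a value is commonly computed: for each threshold t, accuracy is the fraction of test images whose pose error is at most t, and the curve is integrated with the trapezoidal rule and normalised to [0, 1]. The error metric (an ADD-style distance, or the closest-point ADD-S variant often used for symmetric objects) and the maximum threshold of 0.1 are assumptions, since the evaluation protocol is not given here; `accuracy_threshold_auc` is an illustrative name.

```python
import numpy as np


def accuracy_threshold_auc(errors, max_threshold: float = 0.1, steps: int = 1000) -> float:
    """Normalised area under the accuracy-threshold curve.

    errors: one pose error per test image (assumed ADD-style distance).
    accuracy(t) is the fraction of images whose error is at most t. The curve
    is integrated with the trapezoidal rule over [0, max_threshold] and divided
    by max_threshold, so a perfect estimator scores 1.0.
    """
    errors = np.asarray(errors, dtype=float)
    thresholds = np.linspace(0.0, max_threshold, steps + 1)
    accuracy = (errors[None, :] <= thresholds[:, None]).mean(axis=1)
    area = np.sum((accuracy[1:] + accuracy[:-1]) / 2.0 * np.diff(thresholds))
    return float(area / max_threshold)


print(round(accuracy_threshold_auc([0.01, 0.02, 0.03, 0.2]), 2))  # 0.6
```

The trapezoidal sum is written out by hand rather than calling a NumPy integration helper, so the sketch does not depend on which NumPy version is installed.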

