Research on the recognition and localization method of small mechanical parts based on improved U-Net


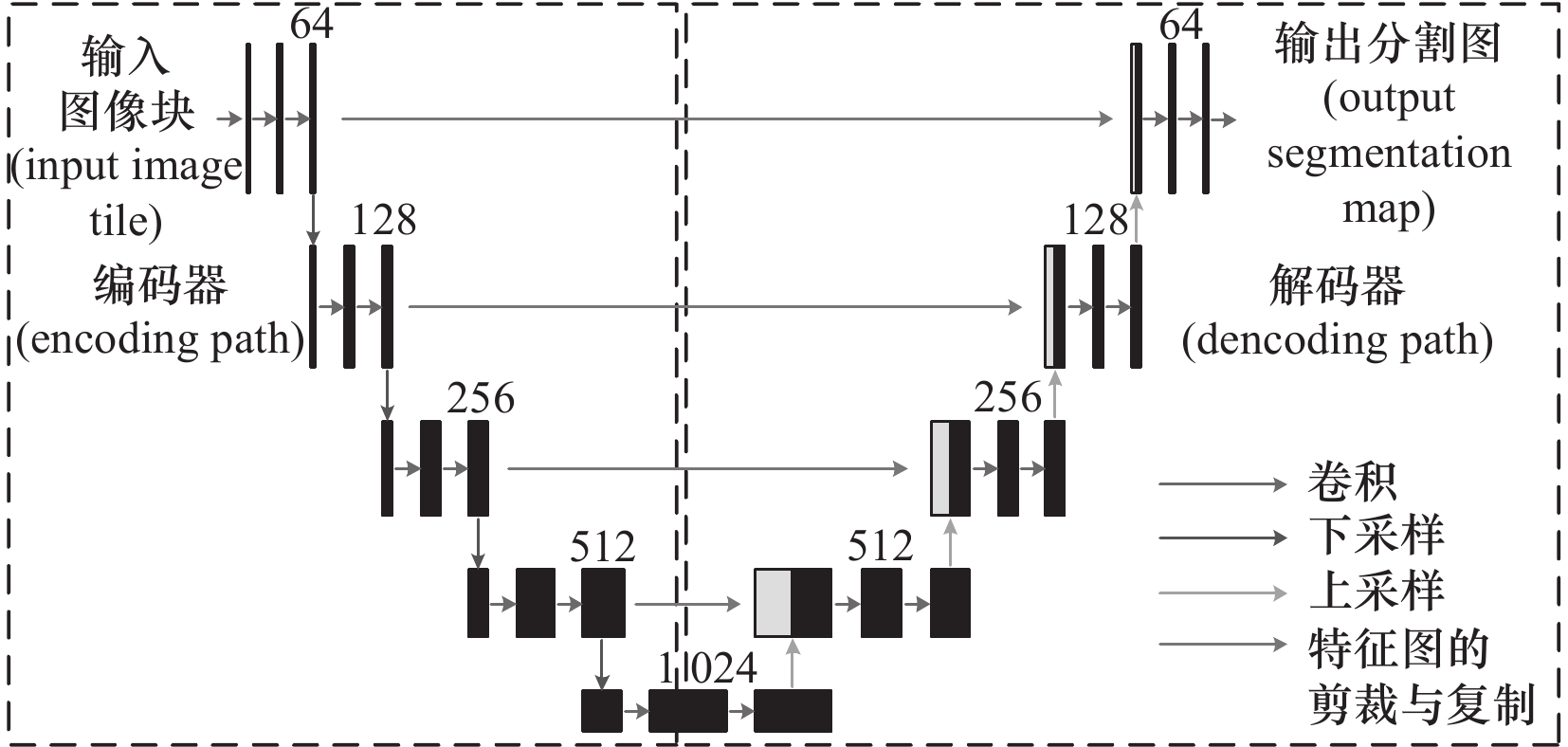

Abstract: To address the slow recognition and imprecise localization of small mechanical parts in machine-vision systems, this paper proposes a recognition and localization method, IU-Net-MBR, that combines an improved U-Net (IU-Net) with the minimum bounding rectangle (MBR). First, a visual sorting test platform is built and a data set of small mechanical parts is produced. Second, to improve feature extraction efficiency, the feature extraction network of U-Net is replaced with the lightweight MobileNetV2 network, which reduces the model's parameter count and computational cost. Third, to improve the segmentation accuracy and robustness of U-Net, the SE (squeeze-and-excitation) attention module is introduced into the network structure. Finally, the minimum bounding rectangle is used to obtain the basic length and width parameters of each part, realizing part recognition and localization. Experiments show that, relative to U-Net, IU-Net improves mean intersection over union (Miou) and pixel accuracy (PA) by 4.39 and 3.82 percentage points, respectively. When processing images, the speed of IU-Net is 76.92% higher than that of U-Net. Compared with mainstream segmentation models, IU-Net achieves better segmentation results and effectively improves the segmentation accuracy of small mechanical parts. In the grasping test, IU-Net-MBR achieves a recognition rate of 100% and a grasping rate of 96.67%.
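The abstract does not say how the minimum bounding rectangle is computed, so the following is only a minimal sketch of one common approach, assuming the segmented part is available as a list of (x, y) contour or mask points. It relies on the known property that a minimum-area enclosing rectangle has one side collinear with an edge of the convex hull. The function names `convex_hull` and `min_bounding_rectangle` are hypothetical and are not taken from the paper; in practice a library routine such as OpenCV's `cv2.minAreaRect` is often used instead.

```python
import math


def convex_hull(points):
    """Andrew's monotone chain; returns hull vertices in counter-clockwise order."""
    pts = sorted(set(points))
    if len(pts) <= 2:
        return pts

    def cross(o, a, b):
        return (a[0] - o[0]) * (b[1] - o[1]) - (a[1] - o[1]) * (b[0] - o[0])

    lower, upper = [], []
    for p in pts:
        while len(lower) >= 2 and cross(lower[-2], lower[-1], p) <= 0:
            lower.pop()
        lower.append(p)
    for p in reversed(pts):
        while len(upper) >= 2 and cross(upper[-2], upper[-1], p) <= 0:
            upper.pop()
        upper.append(p)
    return lower[:-1] + upper[:-1]


def min_bounding_rectangle(points):
    """Return (center, (length, width), angle_deg) of the minimum-area rectangle.

    length >= width, and angle_deg is the direction of the long side in [0, 180).
    """
    hull = convex_hull(points)
    if len(hull) < 3:
        raise ValueError("need at least three non-collinear points")

    best = None
    n = len(hull)
    for i in range(n):
        x0, y0 = hull[i]
        x1, y1 = hull[(i + 1) % n]
        theta = math.atan2(y1 - y0, x1 - x0)
        c, s = math.cos(theta), math.sin(theta)
        # Rotate the hull by -theta so the current edge is parallel to the x-axis.
        us = [x * c + y * s for x, y in hull]
        vs = [-x * s + y * c for x, y in hull]
        du, dv = max(us) - min(us), max(vs) - min(vs)
        area = du * dv
        if best is None or area < best[0]:
            uc, vc = (max(us) + min(us)) / 2, (max(vs) + min(vs)) / 2
            # Rotate the rectangle center back into image coordinates.
            center = (uc * c - vc * s, uc * s + vc * c)
            angle = math.degrees(theta)
            if dv > du:  # report the long side as "length"
                du, dv = dv, du
                angle += 90.0
            best = (area, center, (du, dv), angle % 180.0)
    return best[1], best[2], best[3]
```

The returned center and angle are the kind of quantities a grasping step could use for positioning and orientation, while the length and width correspond to the basic part parameters mentioned in the abstract. Converting pixel units to physical units would additionally require camera calibration, which this sketch does not cover.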


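Table 1 Test environment

| Hardware environment | Operating system | Integrated development environment | Software environment |
| --- | --- | --- | --- |
| NVIDIA Titan GPUs | Ubuntu 20.0 | PyCharm | PyTorch 1.7.1 |

Table 2 Comparison of model Miou and PA

| Model | Miou/(%) | PA/(%) | Speed/(f/s) | FLOPs/(×10^6) | Params/MB |
| --- | --- | --- | --- | --- | --- |
| U-Net | 76.02 | 91.61 | 7.62 | 31.12 | 124.62 |
| DeepLabv3 | 79.77 | 93.43 | 24.24 | 38.76 | 100.17 |
| PSP-Net | 71.01 | 90.17 | 20.38 | 33.15 | 132.55 |
| FCN | 68.77 | 80.05 | 21.97 | 19.62 | 32.59 |
| IU-Net | 80.41 | 95.43 | 33.39 | 6.39 | 25.41 |

Table 3 Selected equipment models

| Name | Model |
| --- | --- |
| Monocular camera | BFS-U3-89S6C-C CCD industrial camera |
| Robotic arm | Dobot Magician robotic arm |

Table 4 Grasping test results

| Category | Success rate (successes/total)/(%) |
| --- | --- |
| Grasping rate | 96.67 (29/30) |
| Recognition rate | 100 (30/30) |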
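The Miou and PA columns in Table 2 are standard semantic-segmentation metrics. The text here does not spell out their formulas, so the sketch below assumes the conventional definitions: PA is the fraction of correctly labeled pixels, and Miou is the per-class intersection over union, TP / (TP + FP + FN), averaged over classes. The helper names and the flattened label lists are illustrative assumptions, not the authors' evaluation code.

```python
def confusion_matrix(y_true, y_pred, num_classes):
    """cm[t][p] counts pixels whose true class is t and predicted class is p."""
    cm = [[0] * num_classes for _ in range(num_classes)]
    for t, p in zip(y_true, y_pred):
        cm[t][p] += 1
    return cm


def pixel_accuracy(cm):
    """Fraction of all pixels that were assigned their true class."""
    total = sum(sum(row) for row in cm)
    correct = sum(cm[i][i] for i in range(len(cm)))
    return correct / total


def mean_iou(cm):
    """Average of TP / (TP + FP + FN) over classes that occur at least once."""
    n = len(cm)
    ious = []
    for i in range(n):
        tp = cm[i][i]
        fp = sum(cm[r][i] for r in range(n)) - tp  # predicted i, true class differs
        fn = sum(cm[i]) - tp                       # true i, predicted class differs
        denom = tp + fp + fn
        if denom:
            ious.append(tp / denom)
    return sum(ious) / len(ious)


# Toy example: six pixels, two classes (0 = background, 1 = part).
y_true = [0, 0, 1, 1, 1, 0]
y_pred = [0, 1, 1, 1, 0, 0]
cm = confusion_matrix(y_true, y_pred, num_classes=2)
pa = pixel_accuracy(cm)
miou = mean_iou(cm)
```

In a real evaluation the label lists would be the flattened ground-truth and predicted masks accumulated over the whole test set. The grasping and recognition rates in Table 4 are simple ratios of the same kind, for example 29/30 ≈ 96.67%.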

